KubeVela 1.7 has been out for some time, and during that period KubeVela was promoted to a CNCF incubating project, marking a new milestone. Version 1.7 is also a turning point in its own right. KubeVela has focused on an extensible design from the beginning, and demand for new core controller functionality has gradually converged, which frees up more resources for user experience, ease of use, and performance. This article highlights the most prominent features of version 1.7, such as workload takeover and performance optimization.

Taking Over Your Existing Workloads

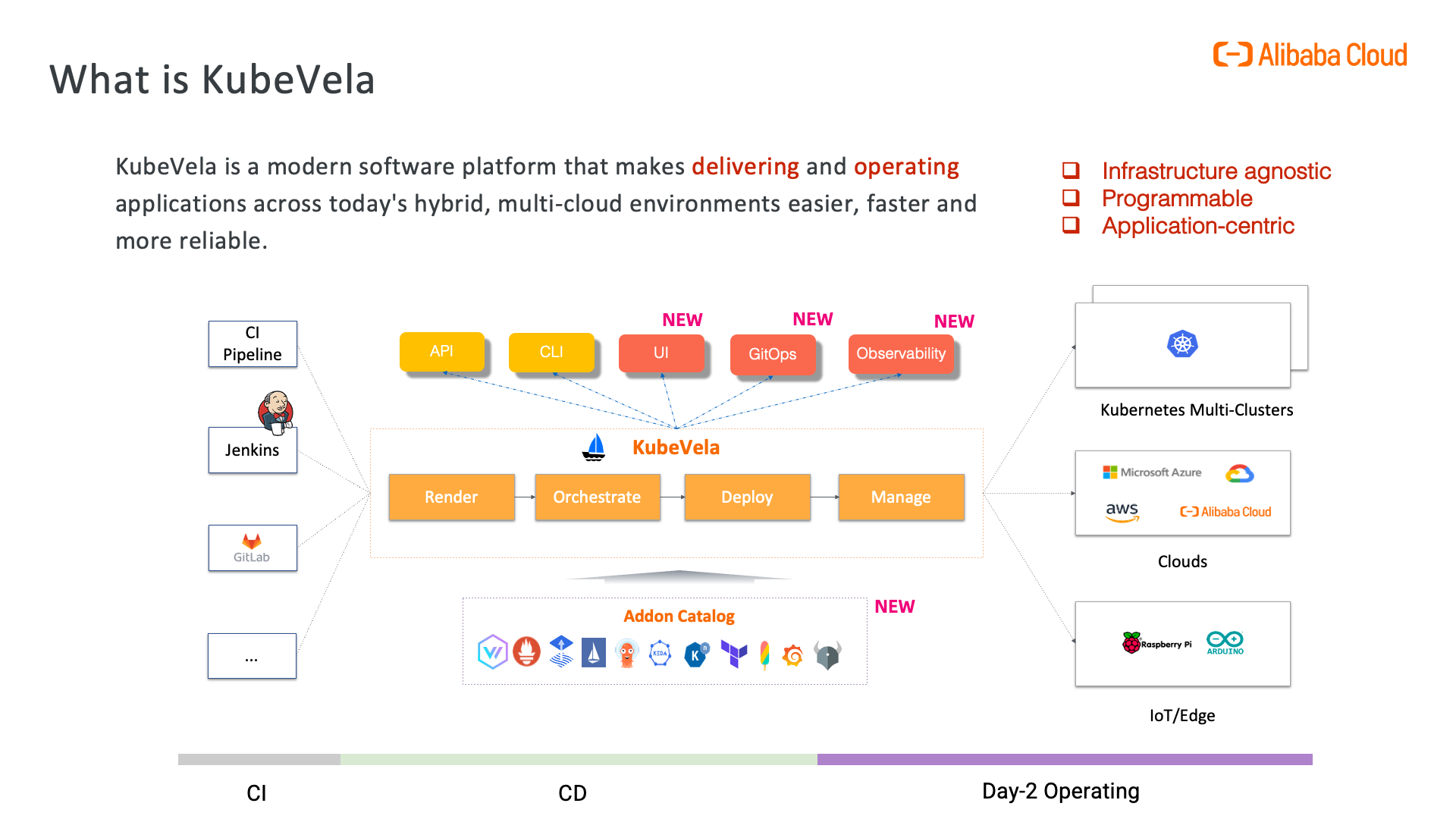

Taking over existing workloads has long been one of the most requested features in the community. The scenario is clear: existing workloads can be migrated naturally to the OAM standard and managed uniformly by KubeVela's application delivery control plane. Workloads that have been taken over can also reuse the functions of the VelaUX UI console, including a range of operational traits, workflow steps, and a rich plugin ecosystem. This feature is officially released in version 1.7. Before going into the operational details, let's first look at the modes it works in.

"read-only" and "take-over" policy

To meet the needs of different usage scenarios, KubeVela provides two modes for unified management. The first is the "read-only" mode, which suits organizations that already run a self-built internal platform and want that platform to keep primary control over existing workloads. In this mode, the new KubeVela-based platform can observe these applications but cannot modify them. The second is the "take-over" mode, which suits users who want to migrate their workloads directly to KubeVela and manage them entirely through it.
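As a rough illustration of how the two modes differ, the sketch below builds an Application manifest in which only the policy type changes between "read-only" and "take-over". The helper `adoption_app`, the `webservice` component, and the `rules`/`selector`/`resourceTypes` layout are assumptions based on the general shape of KubeVela policies, not something taken from this article, so check the field names against the policy reference for your KubeVela version before relying on them.

```python
import json

# The two unified-management modes described above.
MODES = ("read-only", "take-over")


def adoption_app(name, mode, component, image, resource_types=("Deployment",)):
    """Build an Application manifest (as a dict) for an existing workload.

    Hypothetical helper: the policy property layout is an assumption and
    should be verified against the KubeVela documentation.
    """
    if mode not in MODES:
        raise ValueError(f"mode must be one of {MODES}, got {mode!r}")
    return {
        "apiVersion": "core.oam.dev/v1beta1",
        "kind": "Application",
        "metadata": {"name": name},
        "spec": {
            # The component describes the workload that already exists.
            "components": [
                {
                    "name": component,
                    "type": "webservice",
                    "properties": {"image": image},
                }
            ],
            # Only the policy type differs between the two modes.
            "policies": [
                {
                    "name": mode,
                    "type": mode,
                    "properties": {
                        "rules": [
                            {"selector": {"resourceTypes": list(resource_types)}}
                        ]
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    # Print both variants as JSON, which is also valid YAML.
    for mode in MODES:
        print(json.dumps(adoption_app(f"nginx-{mode}", mode, "nginx", "nginx:1.20"), indent=2))
```

The printed manifests could then be saved to a file and applied to the cluster in the usual way. Keeping the mode as a single parameter reflects the idea that moving from observation to full management is meant to be a small, explicit change.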