KubeVela 1.5 was released recently. This release brings more convenient application delivery capabilities to the community, including system observability, CloudShell terminals that move the Vela CLI to the browser, enhanced canary releases, and optimized multi-environment application delivery workflows. It also improved KubeVela's high extensibility as an application delivery platform. The community has started to promote the project to the CNCF Incubation stage. It has absorbed the practice sharing of multiple benchmark users in many community meetings, which proves the community's healthy development. The project is now mature to some extent, and its adoption has made periodical achievements, thanks to the contributions of more than 200 developers in the community.

The Canary Rollout of the Helm Chart Application Is Coming!

Background

Helm is an application packaging and deployment tool of client side widely used in the cloud-native field. Its simple design and easy-to-use features have been recognized by users and formed its ecosystem. Up to now, thousands applications have been packaged using Helm Chart. Helm's design concept is very concise and can be summarized in the following two aspects:

Packaging and templating complex Kubernetes APIs and then abstracting and simplifying them into small number of parameters

Giving Application Lifecycle Solutions: Production, upload (hosting), versioning, distribution (discovery), and deployment.

How to migrate Existing Terraform Cloud Resources to KubeVela

You may have learned from this blog that we can use vela to manage cloud resources (like s3 bucket, AWS EIP and so on) via the terraform plugin. We can create an application which contains some cloud resource components and this application will generate these cloud resources, then we can use vela to manage them.

Sometimes we already have some Terraform cloud resources which may be created and managed by the Terraform binary or something else. In order to have the benefits of using KubeVela to manage the cloud resources or just maintain consistency in the way you manage cloud resources, we may want to import these existing Terraform cloud resources into KubeVela and use vela to manage them. But if we just create an application which describes these cloud resources, the cloud resources will be recreated and may lead to errors. To fix this problem, we made a simple backup_restore tool. This blog will show you how to use the backup_restore tool to import your existing Terraform cloud resources into KubeVela.

Helm Chart Delivery in Multi-Cluster

Helm Charts are very popular that you can find almost 10K different software packaged in this way. While in today's multi-cluster/hybrid cloud business environment, we often encounter these typical requirements: distribute to multiple specific clusters, specific group distributions according to business need, and differentiated configurations for multi-clusters.

In this blog, we'll introduce how to use KubeVela to do multi cluster delivery for Helm Charts.

If you don't have multi clusters, don't worry, we'll introduce from scratch with only Docker or Linux System required. You can also refer to the basic helm chart delivery in single cluster.

Glue Terraform Ecosystem into Kubernetes World

If you're looking for something to glue Terraform ecosystem with the Kubernetes world, congratulations! You're getting exactly what you want in this blog.

We will introduce how to integrate terraform modules into KubeVela by fixing a real world problem -- "Fixing the Developer Experience of Kubernetes Port Forwarding" inspired by article from Alex Ellis.

In general, this article will be divided into two parts:

- Part.1 will introduce how to glue Terraform with KubeVela, it needs some basic knowledge of both Terraform and KubeVela. You can just skip this part if you don't want to extend KubeVela as a Developer.

- Part.2 will introduce how KubeVela can 1) provision a Cloud ECS instance by KubeVela with public IP; 2) Use the ECS instance as a tunnel sever to provide public access for any container service within an intranet environment.

OK, let's go!

KubeVela 1.4 released, Make Application Delivery Safe, Foolproof, and Transparent

KubeVela is a modern software delivery control panel. The goal is to make application deployment and O&M simpler, more agile, and more reliable in today's hybrid multi-cloud environment. Since the release of Version 1.1, the KubeVela architecture has naturally solved the delivery problems of enterprises in the hybrid multi-cloud environments and has provided sufficient scalability based on the OAM model, which makes it win the favor of many enterprise developers. This also accelerates the iteration of KubeVela.

In Version 1.2, we released an out-of-the-box visual console, which allows the end user to publish and manage diverse workloads through the interface. The release of Version 1.3 improved the expansion system with the OAM model as the core and provides rich plug-in functions. It also provides users with a large number of enterprise-level functions, including LDAP permission authentication, and provides more convenience for enterprise integration. You can obtain more than 30 addons in the addons registry of the KubeVela community. There are well-known CNCF projects (such as argocd, istio, and traefik), database middleware (such as Flink and MySQL), and hundreds of cloud vendor resources.

In Version 1.4, we focused on making application delivery safe, foolproof, and transparent. We added core functions, including multi-cluster permission authentication and authorization, a complex resource topology display, and a one-click installation control panel. We comprehensively strengthened the delivery security in multi-tenancy scenarios, improved the consistent experience of application development and delivery, and made the application delivery process more transparent.

Trace and visualize the relationships between the kubernetes resources with KubeVela

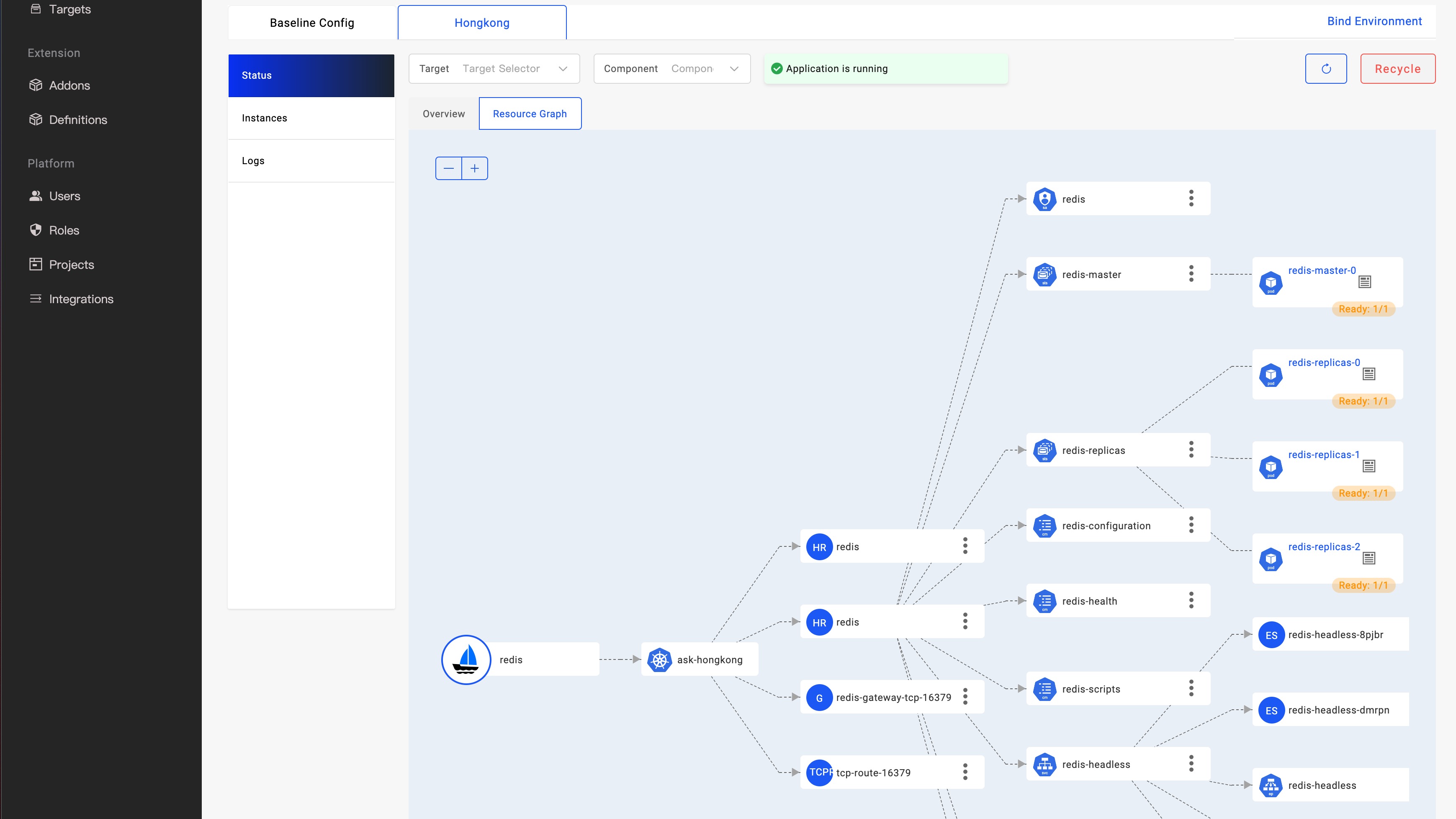

One of the biggest requests from KubeVela community is to provide a transparent delivery process for resources in the application. For example, many users prefer to use Helm Chart to package a lot of complex YAML, but once there is any issue during the deployment, such as the underlying storage can not be provided normally, the associated resources are not created normally, or the underlying configuration is incorrect, etc., even a small problem will be a huge threshold for troubleshooting due to the black box of Helm chart. Especially in the modern hybrid multi-cluster environment, there is a wide range of resources, how to obtain effective information and solve the problem? This can be a very big challenge.

As shown in the figure above, KubeVela has offered a real-time observation resource topology graph for applications, which further improves KubeVela's application-centric delivery experience. Developers only need to care about simple and consistent APIs when initiating application delivery. When they need to troubleshoot problems or pay attention to the delivery process, they can use the resource topology graph to quickly obtain the arrangement relationship of resources in different clusters, from the application to the running status of the Pod instance. Automatically obtain resource relationships, including complex and black-box Helm Charts.

In this post, we will describe how this new feature of KubeVela is implemented and works, and the roadmap for this feature.

Build flexible abstraction for any Kubernetes Resources with CUE and KubeVela

This blog will introduce how to use CUE and KubeVela to build you own abstraction API to reduce the complexity of Kubernetes resources. As a platform builder, you can dynamically customzie the abstraction, build a path from shallow to deep for your developers per needs, adapt to growing number of different scenarios, and meet the iterative demands of the company's long-term business development.

KubeVela v1.3 released, CNCF's Next Generation of Cloud Native Application Delivery Platform

Thanks to the contribution of hundreds of developers from KubeVela community and around 500 PRs from more than 30 contributors, KubeVela version 1.3 is officially released. Compared to v1.2 released three months ago, this version provides a large number of new features in three aspects as OAM engine (Vela Core), GUI dashboard (VelaUX) and addon ecosystem. These new features are derived from the in-depth practice of many end users such as Alibaba, LINE, China Merchants Bank, and iQiyi, and then finally become part of the KubeVela project that everyone can use out of the box.

Pain Points of Application Delivery

So, what challenges have we encountered in cloud-native application delivery?

Easily Manage your Application Shipment With Differentiated Configuration in Multi-Cluster

Under today's multi-cluster business scene, we often encounter these typical requirements: distribute to multiple specific clusters, specific group distributions according to business need, and differentiated configurations for multi-clusters.

KubeVela v1.3 iterates based on the previous multi-cluster function. This article will reveal how to use it to do swift multiple clustered deployment and management to address all your anxieties.